
E5-2650 v2 SR1A8: Latest Performance Report & Key Specs

Across recent benchmark aggregates and used-market price/performance indexes, the E5-2650 v2 still delivers competitive multithreaded throughput for legacy two-socket deployments. Aggregate multi-core scores place it ahead of many older eight-core parts, and it remains cost-effective for refresh-limited budgets. This article presents a concise, data-driven performance report, clarifies key specifications, and offers practical deployment and upgrade guidance for systems engineers and procurement teams.

The goal is actionable clarity: list silicon and platform details, summarize synthetic and real-world benchmark behavior, and provide checklists for compatibility, testing, and end-of-life planning. The write-up relies on concrete indicators (core counts, memory interface limits, typical TDP behavior) and highlights where E5-2650 v2 tradeoffs make sense versus investing in newer platforms.

1 — Background: Where the E5-2650 v2 (SR1A8) Fits Today

1.1 Evolution & architecture context

Point: The E5-2650 v2 belongs to the Ivy Bridge-EP generation of the Xeon E5 family and uses the LGA 2011 socket. Evidence: it is an 8-core, 16-thread design built on Intel's 22 nm Ivy Bridge server silicon, with a quad-channel memory controller and an enterprise feature set. Explanation: that positioning gave it strong multi-thread density for its launch era, a 95 W TDP rating, and a balance of core count versus per-core frequency for server and workstation workloads.

1.2 Typical current use cases

Point: Today this SKU is common in refurbished and budget builds for legacy workloads. Evidence: common deployments include virtualization hosts with moderate VM density, compute nodes for batch HPC, and lab/test benches sourcing used server CPUs. Explanation: ECC and registered memory support plus long platform availability make it attractive for teams prioritizing cost per thread and spare‑parts lifecycle over single‑thread performance.

2 — Technical Specs Deep‑Dive: E5-2650 v2 (SR1A8)

2.1 Core architecture & silicon details

Point: Core and cache characteristics define compute capability. Evidence: the CPU offers eight cores with Hyper-Threading (16 threads), a 2.6 GHz base clock, Turbo Boost up to 3.4 GHz, and 20 MB of shared L3 cache, and it supports memory speeds up to DDR3-1866. Explanation: these attributes favor throughput workloads such as compile farms, parallel renders, and VM consolidation, where aggregate core count and cache capacity dominate task completion time.

2.2 Platform & I/O specifics

Point: Platform I/O and memory topology set practical limits. Evidence: the Ivy Bridge-EP platform uses a quad-channel DDR3 memory controller with registered ECC DIMM support and provides 40 PCIe 3.0 lanes per CPU, with two QPI links for multi-socket coherency and additional lanes supplied by the chipset. Explanation: memory bandwidth and PCIe lane allocation are often the bottlenecks for I/O-heavy workloads; check the motherboard's slot wiring, supported DIMM speeds, and chipset before purchase to avoid unexpected constraints.
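The quad-channel memory interface translates into a theoretical peak bandwidth that is easy to compute. The sketch below assumes a 64-bit data bus per channel (standard for DDR3) and uses a hypothetical helper name, `peak_memory_bandwidth_gbs`; measured bandwidth will fall below this ceiling.

```python
def peak_memory_bandwidth_gbs(channels: int, mega_transfers_per_s: int,
                              bus_width_bits: int = 64) -> float:
    """Theoretical peak memory bandwidth for one socket, in decimal GB/s."""
    bytes_per_transfer = bus_width_bits // 8
    return channels * mega_transfers_per_s * 1e6 * bytes_per_transfer / 1e9


# Four channels of DDR3-1866 versus the same board populated with DDR3-1333.
for speed in (1866, 1333):
    print(f"DDR3-{speed}: {peak_memory_bandwidth_gbs(4, speed):.1f} GB/s per socket")
```

Running the same calculation with the DIMM speed a given board actually trains at shows how much headroom is lost when slower modules are installed.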

3 — Performance Benchmarks & Analysis: SR1A8 vs Contemporaries

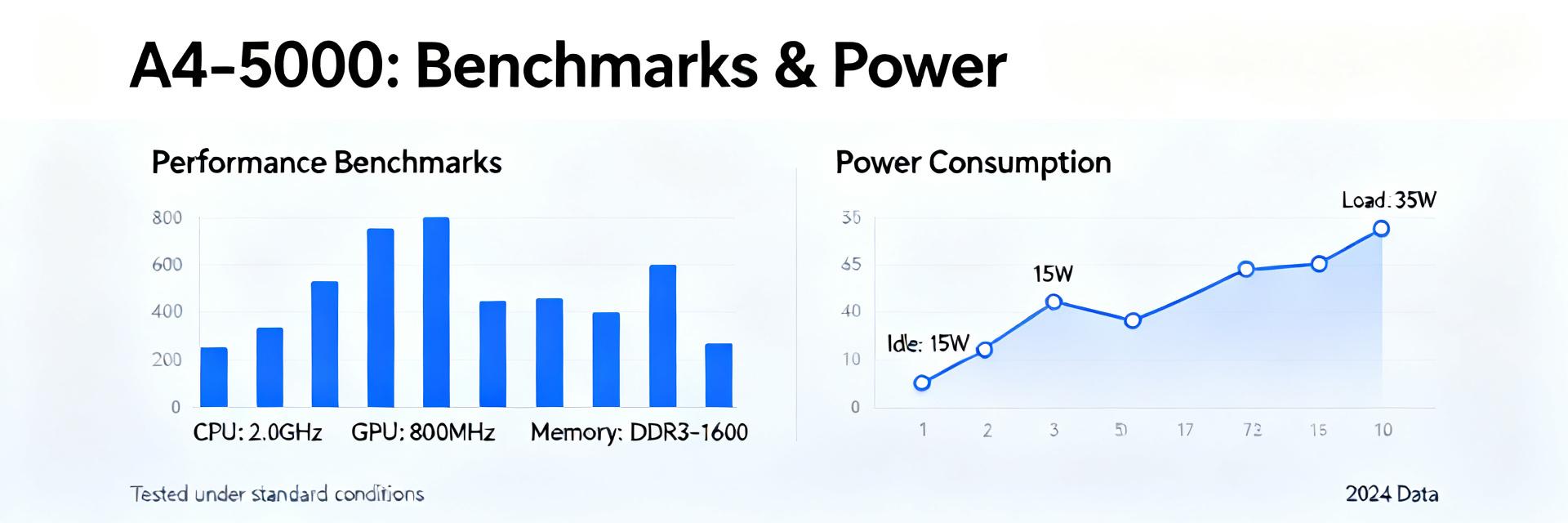

3.1 Synthetic benchmarks & multi‑thread performance

Point: In synthetic multi‑core benchmarks the part remains competitive on throughput metrics. Evidence: aggregated multi‑core scores and Cinebench‑style scaling show strong parallel scaling relative to older generation dual‑CPU nodes, with PassMark‑style throughput often matching higher‑clock but lower‑core alternatives on price‑adjusted comparisons. Explanation: for render farms and parallel compiles, cost‑adjusted core throughput can favor keeping existing E5‑2650 v2 systems versus partial upgrades.
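A price-adjusted comparison can be sketched as a score-per-dollar ranking. Everything below is illustrative: `CpuOption`, `score_per_dollar`, and `rank_by_value` are hypothetical names, and the scores and prices are placeholders to be replaced with locally measured results and current quotes.

```python
from dataclasses import dataclass


@dataclass
class CpuOption:
    name: str
    multicore_score: float  # result from the benchmark you run locally
    unit_price: float       # current quote, same currency for every option


def score_per_dollar(option: CpuOption) -> float:
    """Multi-core benchmark points obtained per unit of currency spent."""
    if option.unit_price <= 0:
        raise ValueError("unit_price must be positive")
    return option.multicore_score / option.unit_price


def rank_by_value(options):
    """Best price-adjusted throughput first."""
    return sorted(options, key=score_per_dollar, reverse=True)


# Placeholder numbers only; they are not measured results.
candidates = [
    CpuOption("existing 8-core part", multicore_score=10000, unit_price=50),
    CpuOption("higher-clock 6-core part", multicore_score=9000, unit_price=90),
]
for option in rank_by_value(candidates):
    print(f"{option.name}: {score_per_dollar(option):.0f} points per dollar")
```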

3.2 Real‑world workloads & power‑efficiency tradeoffs

Point: Real workloads reveal tradeoffs between efficiency and raw speed. Evidence: in VM density tests and typical web/database stacks, the CPU performs well for CPU-bound jobs but can be memory-bandwidth limited on DDR3 configurations; CPU power draw under load is in line with the 95 W TDP rating, and less efficient VRMs on older motherboards add to platform power. Explanation: retaining these CPUs makes sense if consolidation is I/O-lite and spare-parts costs are low, while energy-sensitive deployments may justify upgrades for per-watt gains.

4 — Compatibility, Upgrade Paths & Migration Guidance

4.1 Platform compatibility checklist

Point: A structured compatibility checklist reduces rollout risk. Evidence: verify that the socket type and S-Spec match, ensure the BIOS/firmware includes microcode for the SKU, confirm registered ECC DIMM types and population rules, and validate cooling and PSU headroom for sustained loads. Explanation: exact BIOS revisions and board firmware often determine whether a used CPU will boot; before procurement, record the BIOS ID, confirm that DIMMs are populated evenly across all four channels of each CPU, and verify the firmware microcode revision.
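The checklist can be captured as a small validation routine run against each candidate board. This is a minimal sketch: the record keys (`socket`, `cpu_s_spec`, `bios_has_microcode`, and so on) are hypothetical field names, not the output of any particular inventory tool.

```python
CHANNELS_PER_CPU = 4  # quad-channel memory controller on Ivy Bridge-EP


def compatibility_issues(board: dict) -> list:
    """Return human-readable problems found in a pre-procurement record."""
    issues = []
    if board.get("socket") != "LGA2011":
        issues.append("socket is not LGA 2011")
    if board.get("cpu_s_spec") != "SR1A8":
        issues.append("S-Spec does not match SR1A8")
    if not board.get("bios_has_microcode", False):
        issues.append("BIOS microcode support for the SKU not confirmed")
    if not board.get("dimms_registered_ecc", False):
        issues.append("DIMMs are not registered ECC")
    dimms = board.get("dimms_per_cpu", 0)
    if dimms == 0 or dimms % CHANNELS_PER_CPU != 0:
        issues.append("DIMMs not spread evenly across four channels")
    if board.get("psu_headroom_w", 0) <= 0:
        issues.append("no PSU headroom for sustained load")
    return issues


record = {
    "socket": "LGA2011",
    "cpu_s_spec": "SR1A8",
    "bios_has_microcode": True,
    "dimms_registered_ecc": True,
    "dimms_per_cpu": 2,       # only half of the channels populated
    "psu_headroom_w": 150,
}
print(compatibility_issues(record))
```

An empty list means the record passed every check; any entry is a reason to hold the purchase until the item is resolved.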

4.2 Upgrade options & cost‑benefit decision framework

Point: Choose keep vs. replace based on ROI criteria. Evidence: evaluate incremental performance uplift versus measured power savings, factor in per‑core software licensing costs, and consider platform lifecycle: newer Xeon or AMD EPYC options provide higher single‑thread throughput, memory bandwidth, and I/O consolidation. Explanation: build a simple ROI model comparing upfront upgrade CAPEX, expected annual energy and licensing savings, and projected remaining service life to decide if replacing E5‑2650 v2 instances yields net benefit.
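One way to sketch the ROI model is a simple payback calculation. The function names and the example figures are assumptions for illustration; substitute measured wall power, local energy prices, and actual licensing quotes.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(avg_watts: float, price_per_kwh: float) -> float:
    """Yearly energy cost of a node that runs continuously."""
    return avg_watts / 1000 * HOURS_PER_YEAR * price_per_kwh


def payback_years(upgrade_capex: float, annual_energy_savings: float,
                  annual_licensing_savings: float) -> float:
    """Years needed for savings to repay the upgrade; inf if there are none."""
    annual_savings = annual_energy_savings + annual_licensing_savings
    if annual_savings <= 0:
        return float("inf")
    return upgrade_capex / annual_savings


def replace_is_justified(upgrade_capex: float, annual_energy_savings: float,
                         annual_licensing_savings: float,
                         remaining_service_years: float) -> bool:
    """Replace only if the upgrade pays back within the remaining service life."""
    years = payback_years(upgrade_capex, annual_energy_savings,
                          annual_licensing_savings)
    return years <= remaining_service_years


# Placeholder inputs: old node averages 400 W, replacement averages 250 W.
energy_savings = annual_energy_cost(400, 0.12) - annual_energy_cost(250, 0.12)
print(f"energy savings per year: {energy_savings:.2f}")
print(replace_is_justified(6000, energy_savings, 1500, remaining_service_years=3))
```

The model deliberately ignores the value of any performance uplift; adding it as a further annual benefit would shorten the payback period.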

5 — Deployment & Maintenance Checklist

5.1 Pre‑deployment tests

- Sustained CPU stress runs

- Memory bandwidth validation

- Thermal profiling under load

- VM density trials

5.2 Long‑term maintenance

- Spare parts inventory tracking

- Firmware microcode checks

- ECC error rate logging

- TCO review triggers

Note: Define thresholds for temperatures approaching TjMax, recurring ECC error counts, and sustained frequency throttling, and use them to decide whether a unit is fit for production or requires rework.
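The thresholds in the note above can be applied with a short fitness check. This is a sketch under stated assumptions: `assess_unit` is a hypothetical helper, the default limits (10 °C margin, one ECC error per day, 5% throttled time) are placeholders rather than vendor guidance, and TjMax must be taken from the part's own documentation or sensor readout.

```python
def assess_unit(max_temp_c: float, tjmax_c: float, ecc_errors_per_day: float,
                throttled_fraction: float, temp_margin_c: float = 10.0,
                ecc_limit_per_day: float = 1.0,
                throttle_limit: float = 0.05) -> list:
    """Return reasons a unit needs rework; an empty list means fit for production."""
    reasons = []
    if max_temp_c >= tjmax_c - temp_margin_c:
        reasons.append("peak temperature within margin of TjMax")
    if ecc_errors_per_day > ecc_limit_per_day:
        reasons.append("recurring ECC errors above limit")
    if throttled_fraction > throttle_limit:
        reasons.append("sustained frequency throttling")
    return reasons


def is_fit_for_production(**measurements) -> bool:
    """Convenience wrapper: True when no threshold is exceeded."""
    return not assess_unit(**measurements)
```

Feeding the function values collected during the pre-deployment stress run gives a consistent pass or rework decision across a batch of used units.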

Summary

- ✔ The E5‑2650 v2 (SR1A8) remains a cost‑effective option for legacy two‑socket throughput needs, offering eight cores, 2.6 GHz base clocks, and strong multi‑thread scaling when memory and I/O are not limiting factors.

- ✔ Keep existing units when spare‑parts availability, lower capex, and acceptable energy profiles outweigh per‑core single‑thread performance; prefer upgrades where memory bandwidth, PCIe consolidation, or power efficiency are critical.

- ✔ Before rollout, confirm socket and BIOS compatibility, run a short benchmark suite including memory bandwidth and thermal profiling, and log ECC events; use a simple ROI model to compare upgrade versus maintain decisions.

Frequently Asked Questions

How does E5‑2650 v2 compare to modern CPUs for virtualization density?

The E5‑2650 v2 achieves solid VM density for workloads that are CPU‑bound and not heavily memory‑bandwidth sensitive. In environments where DDR3 limits per‑VM throughput or where high I/O consolidation is required, newer platforms with faster memory and more PCIe lanes will raise density and reduce overhead; evaluate by measuring representative VM workloads locally.

What compatibility checks are required before installing E5‑2650 v2 CPUs?

Verify socket physical match and S‑Spec compatibility, confirm the server BIOS contains the proper microcode for the SKU, ensure supported registered ECC DIMM types and population rules, and check cooling and PSU headroom. A quick POST and OS‑level stress test with ECC logging enabled will validate the platform before production use.

When is replacing E5‑2650 v2 justified on TCO grounds?

Replacement is typically justified when measured energy and licensing savings plus improved performance reduce total cost of ownership within a two‑ to three‑year horizon. If per‑core licensing or power draw from older VRMs becomes a dominant cost, or if workload requirements demand higher single‑thread performance or memory bandwidth, plan an upgrade and quantify expected ROI before procurement.

- Technical Features of PMIC DC-DC Switching Regulator TPS54202DDCR

- STM32F030K6T6: A High-Performance Core Component for Embedded Systems

- Tamura L34S1T2D15 Datasheet Breakdown: Key Specs & Limits

- PAL6055.700HLT Datasheet: Complete Technical Report

- FDP027N08B MOSFET Datasheet Deep-Dive: Key Specs & Test Data

- LT1074IT7: Complete Specs & Key Parameters Breakdown

- How to Verify G88MP061028 Datasheet and Specs - Checklist

- NFAQ0860L36T Datasheet: Measured IPM Performance Report

- 90T03P MOSFET: Complete Specs, Pinout & Ratings Digest

- 3386F-1-101LF Datasheet & Specs — Pinout, Ratings, Sources

-

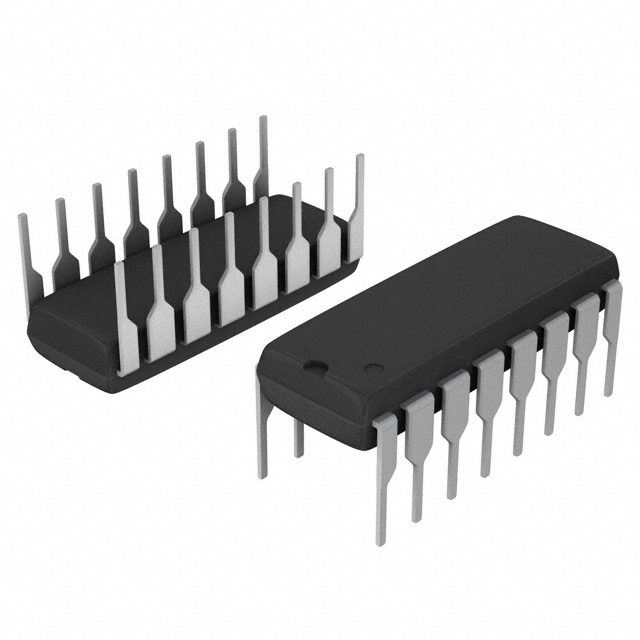

MM74HC4050NSanyo Semiconductor/onsemiIC BUFFER NON-INVERT 6V 16DIP

MM74HC4050NSanyo Semiconductor/onsemiIC BUFFER NON-INVERT 6V 16DIP -

MM74HC4049NSanyo Semiconductor/onsemiIC BUFFER NON-INVERT 6V 16DIP

MM74HC4049NSanyo Semiconductor/onsemiIC BUFFER NON-INVERT 6V 16DIP -

MM74HC4040NSanyo Semiconductor/onsemiIC BINARY COUNTER 12-BIT 16DIP

MM74HC4040NSanyo Semiconductor/onsemiIC BINARY COUNTER 12-BIT 16DIP -

MM74HC4020NSanyo Semiconductor/onsemiIC BINARY COUNTER 14-BIT 16DIP

MM74HC4020NSanyo Semiconductor/onsemiIC BINARY COUNTER 14-BIT 16DIP -

MM74HC393NSanyo Semiconductor/onsemiIC BINARY COUNTR DL 4BIT 14MDIP

MM74HC393NSanyo Semiconductor/onsemiIC BINARY COUNTR DL 4BIT 14MDIP -

MM74HC374NSanyo Semiconductor/onsemiIC FF D-TYPE SNGL 8BIT 20DIP

MM74HC374NSanyo Semiconductor/onsemiIC FF D-TYPE SNGL 8BIT 20DIP -

MM74HC373NSanyo Semiconductor/onsemiIC D-TYPE TRANSP SGL 8:8 20DIP

MM74HC373NSanyo Semiconductor/onsemiIC D-TYPE TRANSP SGL 8:8 20DIP -

LT1213CS8Linear Technology (Analog Devices, Inc.)IC OPAMP GP 2 CIRCUIT 8SO

LT1213CS8Linear Technology (Analog Devices, Inc.)IC OPAMP GP 2 CIRCUIT 8SO -

MM74HC259NSanyo Semiconductor/onsemiIC LATCH ADDRESS 8BIT 16-DIP

MM74HC259NSanyo Semiconductor/onsemiIC LATCH ADDRESS 8BIT 16-DIP -

MM74HC251NSanyo Semiconductor/onsemiIC MULTIPLEXER 1 X 8:1 16DIP

MM74HC251NSanyo Semiconductor/onsemiIC MULTIPLEXER 1 X 8:1 16DIP